Supply Chain Analytics

IoT, Big Data, and artificial intelligence are now transforming almost every aspect of our lives. Data is processed in real time, and crucial AI-based forecasts are continuously adjusted and expanded. This raises expectations for supply chain efficiency and service levels. The challenge for companies is to keep pace in the long term while still being able to fall back quickly on tried-and-tested methods when necessary.

Make decisions based on all available facts. Evaluate your data with state-of-the-art statistical methods and make decisions with confidence. Get a clear picture of all the opportunities and risks. Detect weak points and factors that will make your strategy more successful.

Supply Chain Analytics supports your company on several levels

Your advantages

Continuous data concept for a comprehensive planning process

Data analysis to define an appropriate forecasting strategy

Tool-independent consulting to define the target state

Implementation assistance in the form of project marketing and change management

State-of-the-art approaches in AI, machine learning, forecasting, clustering, pattern detection, and supply chain simulation

Quick implementation based on best-practice templates and expert knowledge

Very user-friendly thanks to various tools

Modular structure enables step-by-step optimization of the supply chain

Our approach

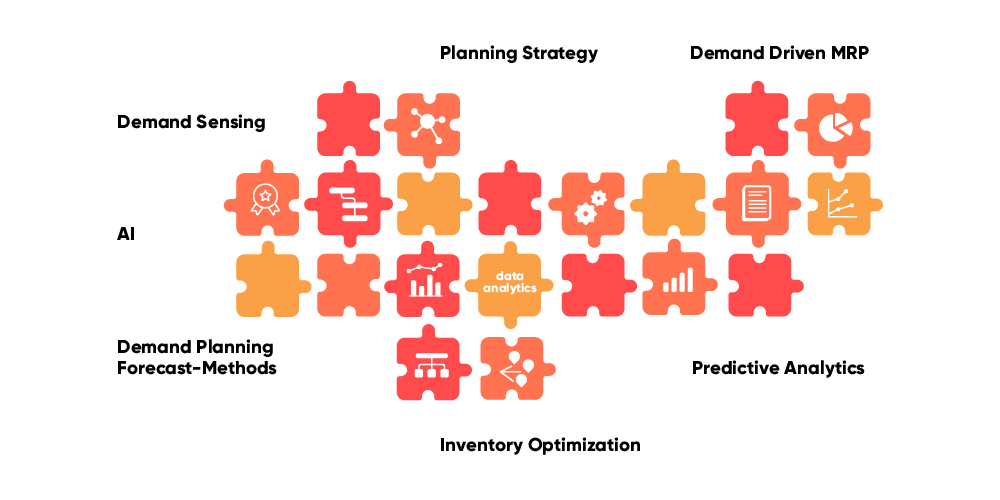

Our goal is to connect isolated solutions in a closed, efficient set-up.

We weigh which is better in each case: using the latest, sometimes difficult methods in individual areas, or pursuing an integrated approach with proven methods that are easy to implement.

In the end, what counts is that everything is right from beginning to end and that the results are used efficiently and in the right place.

It is important to us that our experts are not only among the best in data science but can also demonstrate sound experience in supply chain management.

Only someone who truly understands agile project management and interdisciplinary approaches and ways of thinking can digitalize a complex value chain.

The use of artificial intelligence (AI) in the field of supply chain analytics

Artificial intelligence (AI) is a rapidly expanding field with a wide variety of applications in supply chain analytics.

That’s why we start with an overview that assigns the most important AI methods to specific applications in the supply chain. The assignment is not always 1:1, since the most successful methods can often be used for a whole range of different applications.

Predictive methods:

These methods help predict particular variables in the supply chain. This can involve forecasts or, with demand sensing for example, customers’ short-term order behavior. The methods used here include neural networks and gradient boosting of decision trees, as well as classic forecasting methods such as ARIMA, exponential smoothing, and linear regression.
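As a minimal sketch of one of the classic methods named above, the following applies simple exponential smoothing to a short demand history. The function name, the smoothing factor of 0.3, and the weekly demand figures are illustrative assumptions, not values from a real project.

```python
def exponential_smoothing_forecast(demand, alpha=0.3):
    """Return the one-step-ahead forecast from simple exponential smoothing."""
    if not demand:
        raise ValueError("demand history must not be empty")
    if not 0.0 < alpha <= 1.0:
        raise ValueError("alpha must be in (0, 1]")
    level = demand[0]  # initialise the level with the first observation
    for observed in demand[1:]:
        # Blend the newest observation with the previous smoothed level.
        level = alpha * observed + (1 - alpha) * level
    return level


# Made-up weekly demand, for illustration only.
weekly_demand = [120, 132, 101, 134, 90, 110, 125]
forecast = exponential_smoothing_forecast(weekly_demand, alpha=0.3)
print(f"Forecast for next week: {forecast:.1f}")
```

A higher `alpha` makes the forecast react faster to recent orders, while a lower value smooths out short-term noise.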

Classification methods:

In contrast to predictive methods, classification makes a statement about whether a particular property can be assigned to a data record. A typical example is object detection, where objects are identified in an image. Another application in the supply chain is the assignment of products to particular families based on their characteristic properties. Properties can also be detected in time series, for example seasonality or a linear trend. Text analysis is another important use case because it makes searching for past and future relationships easier.
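The assignment of products to families can be sketched with a nearest-centroid classifier, one of the simplest classification methods. The two features (weight in kg, shelf life in weeks), the family names, and the sample records are hypothetical; a real model would typically scale the features first and use far more data.

```python
import math


def fit_centroids(samples):
    """Compute the mean feature vector for each labelled product family."""
    sums, counts = {}, {}
    for features, family in samples:
        acc = sums.setdefault(family, [0.0] * len(features))
        for i, value in enumerate(features):
            acc[i] += value
        counts[family] = counts.get(family, 0) + 1
    return {family: [v / counts[family] for v in acc] for family, acc in sums.items()}


def classify(features, centroids):
    """Assign the family whose centroid is closest in Euclidean distance."""
    return min(centroids, key=lambda family: math.dist(features, centroids[family]))


# Hypothetical labelled products: (weight_kg, shelf_life_weeks) -> family.
labelled = [
    ((0.5, 2.0), "fresh"),
    ((0.8, 1.0), "fresh"),
    ((1.0, 52.0), "canned"),
    ((0.4, 78.0), "canned"),
]
centroids = fit_centroids(labelled)
print(classify((0.6, 3.0), centroids))
```

The key difference from clustering is that the families are known in advance and the labelled records define them.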

Pattern detection:

This method helps detect commonalities across a multitude of products. In contrast to classification, the classes are not defined in advance. It is the task of the algorithm to define the different groups. Some methods can also arrange product clusters in a hierarchy and assign the appropriate data records to these groups. One of the most common clustering methods is the k-means algorithm.
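A minimal k-means sketch in plain Python illustrates how the algorithm defines the groups itself. To keep the result reproducible, this version assumes a deterministic farthest-point initialisation instead of the random start commonly used; the product features are made up for illustration.

```python
import math


def init_centroids(points, k):
    """Pick the first point, then repeatedly the point farthest from all chosen centroids."""
    centroids = [points[0]]
    while len(centroids) < k:
        centroids.append(
            max(points, key=lambda p: min(math.dist(p, c) for c in centroids))
        )
    return centroids


def assign(points, centroids):
    """Return, for each point, the index of its nearest centroid."""
    return [
        min(range(len(centroids)), key=lambda c: math.dist(p, centroids[c]))
        for p in points
    ]


def k_means(points, k, max_iterations=50):
    """Alternate assignment and centroid update until the assignment stops changing."""
    centroids = init_centroids(points, k)
    labels = None
    for _ in range(max_iterations):
        new_labels = assign(points, centroids)
        if new_labels == labels:
            break  # converged: no point changed its cluster
        labels = new_labels
        for c in range(k):
            members = [p for p, label in zip(points, labels) if label == c]
            if members:  # keep the old centroid if a cluster is empty
                centroids[c] = tuple(sum(dim) / len(members) for dim in zip(*members))
    return centroids, labels


# Hypothetical products described by two normalised features.
products = [(1.0, 1.0), (1.2, 0.8), (0.9, 1.1), (10.0, 10.0), (10.5, 9.5), (9.8, 10.2)]
centroids, labels = k_means(products, k=2)
print(labels)
```

The number of clusters `k` still has to be chosen by the analyst; the algorithm only decides which records belong together.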

Optimization:

This is a completely independent field, one to which simulations also belong. The algorithm’s goal is to find solutions that satisfy predefined boundary conditions. For example, these can be delivery times and production capacities when optimizing a production plan; truck routes can likewise be optimized to determine an optimal delivery plan. The algorithm then calculates a multitude of solutions and tries to identify the best one.
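The idea of calculating many candidate solutions and selecting the best can be sketched with a brute-force search over truck routes. The depot and customer coordinates are hypothetical, and straight-line distance stands in for real travel time. Enumerating every permutation only works for a handful of stops; practical planners rely on dedicated solvers or heuristics.

```python
import itertools
import math


def route_length(depot, stops):
    """Total distance of a round trip: depot -> stops in order -> depot."""
    path = [depot, *stops, depot]
    return sum(math.dist(a, b) for a, b in zip(path, path[1:]))


def best_route(depot, customers):
    """Evaluate every visiting order and return the shortest one."""
    return min(
        itertools.permutations(customers),
        key=lambda order: route_length(depot, order),
    )


# Hypothetical coordinates in km.
depot = (0.0, 0.0)
customers = [(4.0, 3.0), (0.0, 3.0), (4.0, 0.0)]
route = best_route(depot, customers)
print(route, route_length(depot, route))
```

Boundary conditions such as delivery windows or vehicle capacity could be added by discarding candidate routes that violate them before comparing lengths.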

Many of the AI methods mentioned are used in supply chain analytics consulting. Methods and models that have proven themselves in a pilot project can be quickly transferred to the production environment and scaled there as a cloud application.

AI methods in the field of supply chain analytics

A basic property of most AI methods is that there is an underlying mathematical model that can be parameterized. Parameters are, for example, the weights in a neural network, but also the parameters of a statistical forecast (e.g. alpha and beta in second-order exponential smoothing). Another basic requirement for all AI methods is that the quality of the parameters can be measured using an error function. Given these two conditions, the model can be optimized. This can be done with training or, in the simplest case, with an analytical approach (the solution of an equation).
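The interplay of parameterized model, error function, and optimization can be sketched as follows. The sketch assumes that "second-order exponential smoothing" refers to Holt's linear-trend method with parameters alpha and beta, uses the mean squared one-step-ahead error as the error function, and replaces real training with a coarse grid search. The demand series is made up.

```python
def holt_one_step_errors(series, alpha, beta):
    """Return the one-step-ahead forecast errors of Holt's linear-trend method."""
    if len(series) < 2:
        raise ValueError("need at least two observations")
    level, trend = series[0], series[1] - series[0]  # simple initialisation
    errors = []
    for observed in series[1:]:
        forecast = level + trend
        errors.append(observed - forecast)
        new_level = alpha * observed + (1 - alpha) * forecast
        trend = beta * (new_level - level) + (1 - beta) * trend
        level = new_level
    return errors


def mean_squared_error(errors):
    """Error function: average of the squared forecast errors."""
    return sum(e * e for e in errors) / len(errors)


def fit_holt(series, steps=10):
    """Grid search: try alpha, beta in {1/steps, ..., 1} and keep the best pair."""
    grid = [i / steps for i in range(1, steps + 1)]
    return min(
        ((a, b) for a in grid for b in grid),
        key=lambda p: mean_squared_error(holt_one_step_errors(series, *p)),
    )


# Made-up demand series with an upward trend.
demand = [12, 15, 14, 18, 21, 20, 24, 27]
alpha, beta = fit_holt(demand)
print(alpha, beta, mean_squared_error(holt_one_step_errors(demand, alpha, beta)))
```

Linear regression is an example of the analytical case: its least-squares parameters follow directly from solving an equation, so no iterative search is needed.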

One thing is clear: AI is only ever as good as the data it is based on. This applies not just to the quality of the data but also to the comprehensive selection of data records. Only if the right data records are used can the model be optimized correctly.